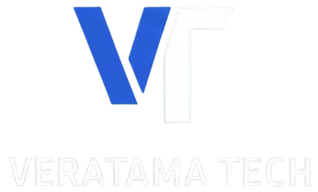

The Era of 1-bit LLMs: All Large Language Models are in 1.58 Bits

As Generative AI continues to push boundaries, one of the biggest challenges facing researchers and developers is the sheer size and complexity of LLMs.

These models, while impressive, demand enormous amounts of memory and computational resources, making them difficult to deploy on everyday devices. This has led to a growing gap between innovation and accessibility, where only those with high-end hardware can experiment with or run these models.

But what if we could drastically reduce the size of these models without sacrificing performance?

Enter 1-bit LLMs, an approach that could reshape the future of AI by making large models more efficient, portable, and accessible. The idea was introduced a few months ago in the paper

The Era of 1-bit LLMs: All Large Language Models are in 1.58 Bits

which described LLMs whose weights are stored in roughly one bit each (1.58 bits, to be precise), a huge memory saver. Microsoft has now released the official framework, BitNet.cpp, to run these 1-bit LLMs, namely the BitNet b1.58 models, on a local system using just a CPU.

Before jumping into BitNet.cpp, a quick recap.

What are 1-bit LLMs?

1-bit LLMs are a breakthrough in generative AI, designed to tackle the growing challenges of model size and efficiency.

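Instead of the usual 32 or 16 bits for storing each weight, 1-bit LLMs use roughly a single bit per weight, massively cutting down on memory needs. BitNet b1.58 in particular restricts every weight to one of three values (-1, 0, or +1), which works out to log2(3) ≈ 1.58 bits per weight, hence the name.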

For example, the weights of a 7-billion-parameter model, which take around 26 GB at 32-bit precision, shrink to about 0.815 GB at exactly 1 bit per weight, making it possible to run on smaller devices like mobile phones.
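The arithmetic behind those figures can be checked with a short sketch. It assumes the sizes are binary gigabytes (GiB) and counts only the weights, ignoring activations, caches, and any layers kept at higher precision; the helper name `weight_memory_gib` is made up for this illustration.

```python
import math

GIB = 1024 ** 3  # bytes in one binary gigabyte (assumed unit for the figures above)

def weight_memory_gib(num_params, bits_per_weight):
    """Memory needed to store the weights alone, in GiB."""
    return num_params * bits_per_weight / 8 / GIB

params = 7_000_000_000  # a 7-billion-parameter model

# Compare common precisions with ternary (log2(3) bits) and exactly 1 bit.
for label, bits in [
    ("FP32", 32),
    ("FP16", 16),
    ("ternary, log2(3)", math.log2(3)),
    ("1 bit", 1),
]:
    print(f"{label:>18}: {weight_memory_gib(params, bits):7.3f} GiB")
```

The FP32 and 1-bit rows reproduce the roughly 26 GB and 0.815 GB quoted above. At the ideal ternary rate of about 1.58 bits per weight the figure is somewhat higher, but still a small fraction of the FP16 size.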

The flagship model in this space, BitNet b1.58, is built to boost performance while consuming fewer resources.
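The "1.58" in the name comes from log2(3) ≈ 1.58: each weight takes one of three values, -1, 0, or +1. The BitNet b1.58 paper describes an "absmean" rounding scheme for getting there, which this article does not spell out. Below is a minimal pure-Python sketch of that idea on a flat list of weights. The function name and the sample values are illustrative only, and the real model applies this to whole weight matrices during training rather than as a one-off conversion.

```python
def absmean_ternary_quantize(weights, eps=1e-8):
    """Map float weights to {-1, 0, +1} using the mean absolute value as scale.

    Returns (ternary_weights, scale). Sketch only, not the training-time code.
    """
    # Scale = average magnitude of the weights.
    scale = sum(abs(w) for w in weights) / len(weights)
    ternary = []
    for w in weights:
        q = round(w / (scale + eps))   # round the rescaled weight to an integer
        q = max(-1, min(1, q))         # clip into the ternary range
        ternary.append(q)
    return ternary, scale

# Illustrative values, not taken from any real model.
weights = [0.9, -0.05, -1.4, 0.3, 0.02, -0.6]
ternary, scale = absmean_ternary_quantize(weights)
print(ternary)
print(round(scale, 4))
```

Small weights collapse to 0 and larger ones snap to ±1, so only the sign pattern and a single scale value need to be stored.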

Get a more detailed explanation here:

Coming back to the topic of the hour

BitNet.cpp

Microsoft’s new framework, BitNet.cpp, is at the forefront of this shift, offering a way to run massive language models with minimal resources. Let’s explore how this game-changing technology works and why it’s such a significant leap forward.

bitnet.cpp is the official framework for inference with 1-bit LLMs (e.g., BitNet b1.58).

It includes a set of optimized kernels for fast and lossless inference of 1.58-bit models on CPUs, with NPU and GPU support planned for future releases.

The first version of bitnet.cpp focuses on CPU inference.

It delivers speedups of 1.37x to 5.07x on ARM CPUs, with larger models seeing greater gains.

Energy consumption on ARM CPUs is reduced by 55.4% to 70.0%, enhancing efficiency.

On x86 CPUs, speedups range from 2.37x to 6.17x, with energy savings between 71.9% and 82.2%.

bitnet.cpp can run a 100B BitNet b1.58 model on a single CPU, processing at 5–7 tokens per second, similar to human reading speed.
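One intuition for why ternary weights suit CPUs: when every weight is -1, 0, or +1, a dot product needs no multiplications, only additions and subtractions. The sketch below illustrates that idea in plain Python. It is not how bitnet.cpp's optimized kernels are implemented, and `ternary_dot` is a hypothetical helper written for this example.

```python
def ternary_dot(ternary_weights, activations, scale=1.0):
    """Dot product with weights in {-1, 0, +1} using only add and subtract.

    `scale` stands in for a per-tensor scale factor applied once at the end.
    """
    acc = 0.0
    for w, x in zip(ternary_weights, activations):
        if w == 1:
            acc += x      # +1: add the activation
        elif w == -1:
            acc -= x      # -1: subtract the activation
        # 0: skip the activation entirely
    return acc * scale

# Illustrative values only.
w = [1, 0, -1, 1]
x = [0.5, 2.0, 1.5, -1.0]

result = ternary_dot(w, x)
reference = sum(wi * xi for wi, xi in zip(w, x))  # ordinary multiply-accumulate
print(result, reference)
```

Both computations agree, but the ternary version never multiplies a weight by an activation, and zero weights cost nothing.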

The framework currently supports 3 models:

- bitnet_b1_58-large: 0.7B parameters

- bitnet_b1_58-3B: 3.3B parameters

- Llama3-8B-1.58-100B-tokens: 8.0B parameters

These models are optimized for efficient inference and are available for use in various applications.

How is BitNet.cpp different from Llama.cpp?

Installation

There are a few prerequisites, and installation is not as straightforward as pip install:

- Ensure you have Python 3.9 or later, CMake 3.22 or higher, and Clang 18 or above installed.

- Windows users should install Visual Studio 2022 and activate the following features: Desktop development with C++, C++-CMake Tools, Git for Windows, C++-Clang Compiler, and MS-Build Support for LLVM (Clang).

- Debian/Ubuntu users can run the automatic LLVM installation script using: bash -c "$(wget -O - https://apt.llvm.org/llvm.sh)"
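Before building, it can help to confirm that the minimum versions listed above are actually present. The following is a rough sketch rather than part of the BitNet repository: the helper names are invented for this example, it assumes `cmake` and `clang` are on the PATH and respond to `--version`, and the version parsing is a simple heuristic that takes the first X.Y[.Z] number it finds.

```python
import re
import shutil
import subprocess
import sys

# Minimum versions taken from the prerequisites listed above.
MINIMUMS = {"cmake": (3, 22), "clang": (18, 0)}

def parse_version(text):
    """Return the first X.Y[.Z] version in `text` as a tuple of ints, or None."""
    match = re.search(r"(\d+)\.(\d+)(?:\.(\d+))?", text)
    if match is None:
        return None
    return tuple(int(part) for part in match.groups() if part is not None)

def tool_version(tool):
    """Run `<tool> --version` and parse the result; None if the tool is unavailable."""
    path = shutil.which(tool)
    if path is None:
        return None
    try:
        out = subprocess.run([path, "--version"], capture_output=True,
                             text=True, timeout=10)
    except (OSError, subprocess.SubprocessError):
        return None
    return parse_version(out.stdout or out.stderr)

def check_prerequisites():
    """Print a short report and return True if every requirement is met."""
    ok = sys.version_info >= (3, 9)
    print(f"python {sys.version_info.major}.{sys.version_info.minor}: "
          f"{'ok' if ok else 'needs 3.9+'}")
    for tool, minimum in MINIMUMS.items():
        found = tool_version(tool)
        good = found is not None and found[:2] >= minimum
        shown = ".".join(map(str, found)) if found else "not found"
        print(f"{tool} {shown}: {'ok' if good else 'needs ' + '.'.join(map(str, minimum)) + '+'}")
        ok = ok and good
    return ok

if __name__ == "__main__":
    check_prerequisites()
```

Running the script prints one line per requirement. A "not found" entry usually means the tool is either not installed or not on the PATH of the current shell.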

The official framework repo can be explored here:

Some of the 1-bit LLMs can be explored on HuggingFace as well, like this one:

To be honest, I consider this the biggest release so far. The size of LLMs is becoming a real challenge, and as a result only a few people are able to experiment and do research with them. With the arrival of 1-bit LLMs and frameworks like BitNet.cpp, this gap should narrow, and GenAI advancements should become more reachable for everyone.

Do try out the BitNet framework.

Source URL: https://medium.com/data-science-in-your-pocket/microsoft-bitnet-cpp-framework-for-1-bit-llms-8a7216fe28cb
